CloudSigma allows customers to add GPUs to their virtual machines and use high-performance, cost-effective computing that can meet the most demanding workloads. The heart of CloudSigma’s GPU offering is the NVIDIA A100 Tensor Core GPU, optimised for HPC, AI and data analytics. The A100 outperforms the NVIDIA Tesla V100 and has new features that AI applications can take full advantage of. Customers can easily build VMs optimized for the NVIDIA A100 in passthrough mode, so VM instances have direct control over the GPU(s) and their built-in memory.
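Because a passthrough GPU is handed directly to the VM, the guest operating system should see it as an ordinary device once the driver is loaded. As a minimal sketch, assuming the NVIDIA driver and its `nvidia-smi` utility are installed inside the VM, the following Python snippet lists the visible GPUs and their total memory. The helper names `parse_gpu_list` and `list_gpus` are illustrative only and are not part of any CloudSigma or NVIDIA tooling.

```python
import shutil
import subprocess

# Standard nvidia-smi query flags: one CSV line per GPU, no header row.
QUERY = ["nvidia-smi", "--query-gpu=name,memory.total", "--format=csv,noheader"]


def parse_gpu_list(csv_text):
    """Turn nvidia-smi CSV output into a list of (name, memory) tuples."""
    gpus = []
    for line in csv_text.strip().splitlines():
        if not line.strip():
            continue
        name, memory = [field.strip() for field in line.split(",", 1)]
        gpus.append((name, memory))
    return gpus


def list_gpus():
    """Return the GPUs visible in this VM, or an empty list if none can be queried."""
    if shutil.which("nvidia-smi") is None:
        return []  # driver utilities not installed (assumption: they live on PATH)
    result = subprocess.run(QUERY, capture_output=True, text=True, check=False)
    if result.returncode != 0:
        return []
    return parse_gpu_list(result.stdout)


if __name__ == "__main__":
    for name, memory in list_gpus():
        print(f"{name}: {memory}")
```

An empty result usually means the driver is not installed or no GPU is attached to the VM.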

Use cases

The growth of compute-intensive applications running in the cloud has driven the recent explosion of GPU-accelerated cloud computing. These applications include AI deep learning training and inference, data analytics, scientific computing, genomics, graphics rendering, and gaming, to name just a few. From scaling up AI training and scientific computing, to scaling out inference applications, to enabling real-time conversational AI, GPUs provide the necessary power to accelerate numerous complex and unpredictable workloads running in the cloud.

The NVIDIA A100 Tensor Core GPU represents a giant leap forward, delivering unprecedented acceleration for AI, data analytics, and HPC at every scale. Powered by the NVIDIA Ampere Architecture, the A100 provides up to 20X higher performance than the previous generation. CloudSigma makes the 80GB memory version available, which offers the world’s fastest memory bandwidth at over 2 terabytes per second (TB/s) to run the largest models and datasets.

NVIDIA GPUs are among the leading computational engines powering AI by providing significant speedups for AI training and inference workloads. In addition, NVIDIA GPUs accelerate many types of HPC and data analytics applications and systems, turning data into insights.

AI and HPC

Train complex machine learning models faster and more efficiently with GPU acceleration. Tackle data-intensive tasks and achieve breakthroughs in AI-driven innovation. NVIDIA AI Enterprise is an end-to-end, cloud-native suite of AI and data analytics software optimized to enable any organization to use AI. It’s certified to deploy on the public cloud and includes global enterprise support to keep AI projects on track. The A100 allows researchers to rapidly deliver real-world results and deploy solutions into production at scale.

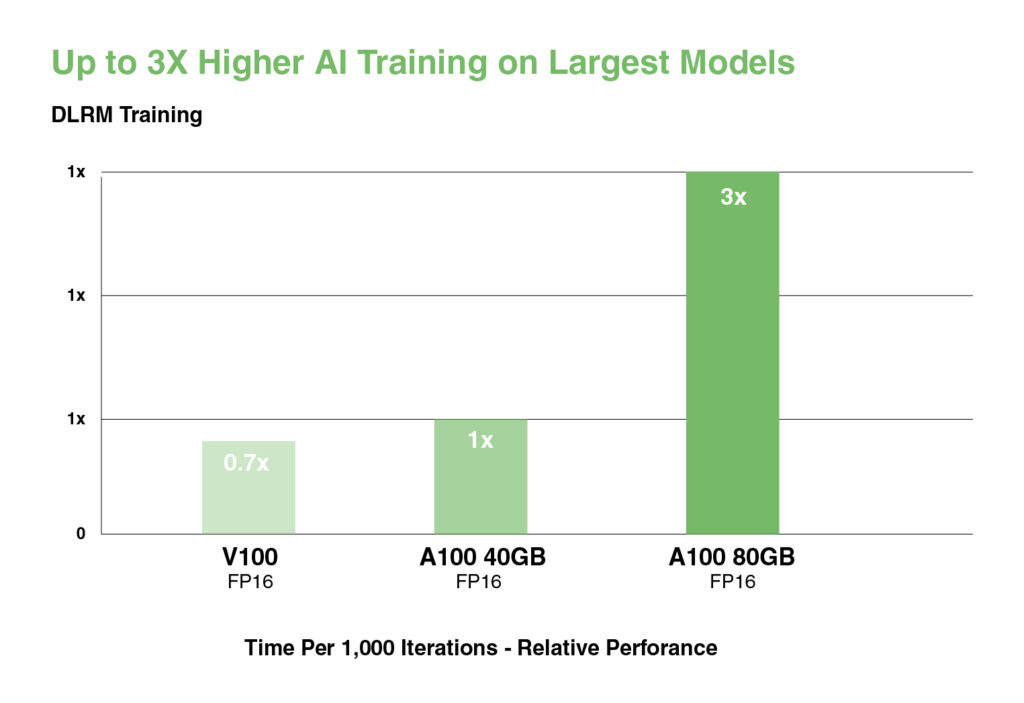

DEEP LEARNING TRAINING

Training AI models requires massive computing power and scalability. NVIDIA A100 Tensor Cores with Tensor Float 32 (TF32) provide up to 20X higher performance over NVIDIA Volta with zero code changes, and an additional 2X boost with automatic mixed precision and FP16.
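To give a feel for what TF32 trades away for that speed, here is a rough sketch in plain Python. TF32 keeps the 8-bit exponent of FP32 but only 10 mantissa bits, so it covers the same numeric range with less precision. The function `to_tf32` is a hypothetical helper that approximates this by truncating a 32-bit float; the rounding performed by real Tensor Cores may differ, so treat the output as an illustration rather than a bit-exact emulation.

```python
import struct


def to_tf32(value):
    """Approximate TF32 by zeroing the 13 low mantissa bits of a float32.

    Assumption: simple truncation; actual hardware rounding may differ.
    """
    bits = struct.unpack("<I", struct.pack("<f", value))[0]
    bits &= 0xFFFFE000  # keep sign, 8 exponent bits, top 10 mantissa bits
    return struct.unpack("<f", struct.pack("<I", bits))[0]


if __name__ == "__main__":
    for x in (1.0, 3.14159265, 1 + 2**-11, 12345.678):
        approx = to_tf32(x)
        rel_err = abs(approx - x) / abs(x)
        print(f"{x!r:>14} -> {approx!r:<20} relative error {rel_err:.2e}")
```

The relative error stays below roughly one part in a thousand, which is typically acceptable for deep learning training while letting the matrix math run on Tensor Cores.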

A training workload like BERT can be solved at scale in under a minute by 2,048 A100 GPUs, a world record for time to solution.

For the largest models with massive data tables like deep learning recommendation models (DLRM), A100 80GB reaches up to 1.3 TB of unified memory per node and delivers up to a 3X throughput increase over A100 40GB.

NVIDIA has demonstrated its leadership in MLPerf, setting multiple performance records in the industry-wide benchmark for AI training.

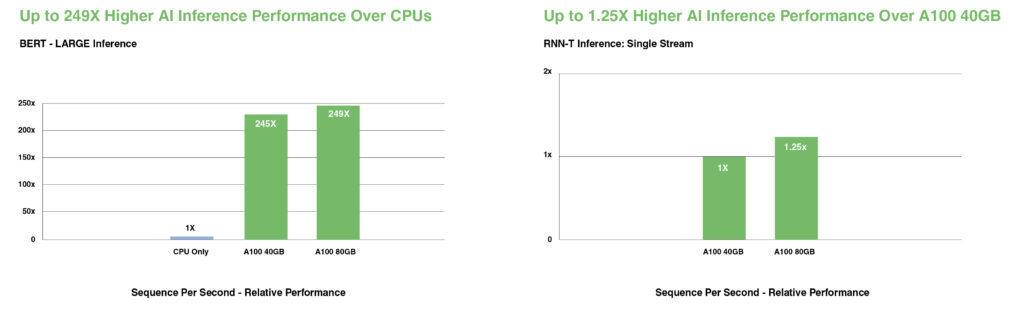

DEEP LEARNING INFERENCE

A100 introduces groundbreaking features to optimize inference workloads. It accelerates a full range of precision from FP32 to INT4. Multi-Instance GPU (MIG) technology lets multiple networks operate simultaneously on a single A100 for optimal compute resource utilisation. And structural sparsity support delivers up to 2X more performance on top of A100’s other inference performance gains.
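The structural sparsity mentioned above can be sketched in a few lines. The text here does not spell out the pattern; NVIDIA documents the Ampere feature as 2:4 sparsity, in which two of every four consecutive weights are zero so the hardware can skip them. The function `prune_2_of_4` below is a hypothetical, simplified illustration that keeps the two largest-magnitude weights in each group of four. Real workflows normally rely on framework tooling and retrain the model after pruning to recover accuracy.

```python
def prune_2_of_4(weights):
    """Zero the two smallest-magnitude values in each group of four weights."""
    if len(weights) % 4 != 0:
        raise ValueError("weight count must be a multiple of 4")
    pruned = []
    for start in range(0, len(weights), 4):
        group = weights[start:start + 4]
        # Indices of the two largest magnitudes in this group are kept.
        keep = sorted(range(4), key=lambda i: abs(group[i]), reverse=True)[:2]
        pruned.extend(v if i in keep else 0.0 for i, v in enumerate(group))
    return pruned


if __name__ == "__main__":
    row = [0.1, -0.9, 0.5, 0.05, 0.7, 0.2, -0.3, 0.0]
    print(prune_2_of_4(row))
```

Every group of four ends up with exactly two non-zero slots, which is the regular layout that makes the "up to 2X" gain possible.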

On state-of-the-art conversational AI models like BERT, A100 accelerates inference throughput up to 249X over CPUs.

On the most complex models that are batch-size constrained, like RNN-T for automatic speech recognition, A100 80GB’s increased memory capacity doubles the size of each MIG instance and delivers up to 1.25X higher throughput over A100 40GB.

NVIDIA’s market-leading performance was demonstrated in MLPerf Inference. A100 brings 20X more performance to extend that leadership further.

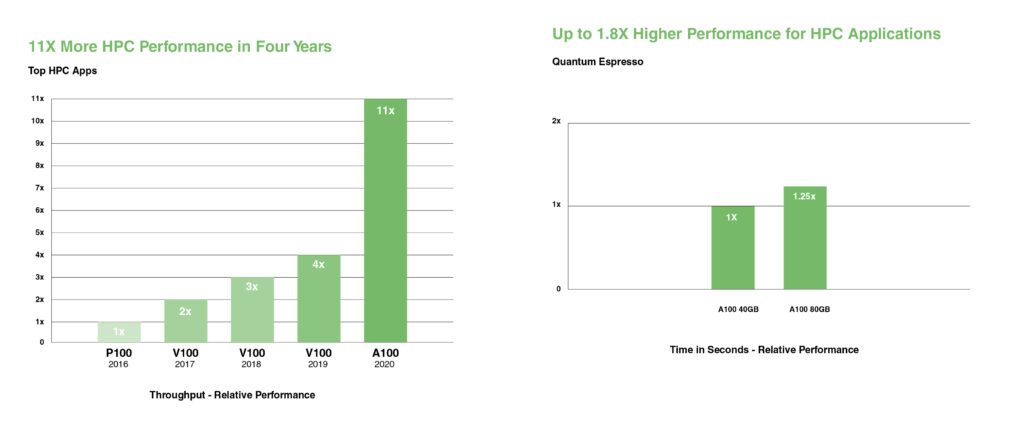

HIGH-PERFORMANCE COMPUTING

To unlock next-generation discoveries, scientists look to simulations to understand the world around us better.

NVIDIA A100 introduces double precision Tensor Cores to deliver the biggest leap in HPC performance since the introduction of GPUs. With 80GB of the fastest GPU memory, researchers can reduce a 10-hour, double-precision simulation to under four hours on A100. HPC applications can leverage TF32 to achieve up to 11X higher throughput for single-precision, dense matrix-multiply operations.

For the HPC applications with the largest datasets, A100 80GB’s additional memory delivers up to a 2X throughput increase with Quantum Espresso, a materials simulation. This massive memory and unprecedented memory bandwidth make the A100 80GB the ideal platform for next-generation workloads.

HIGH-PERFORMANCE DATA ANALYTICS

Data scientists need to be able to analyze, visualize, and turn massive datasets into insights. But scale-out solutions are often bogged down by datasets scattered across multiple servers.

Accelerated servers with A100 provide the needed compute power, along with massive memory, over 2 TB/s of memory bandwidth, and scalability with NVIDIA® NVLink® and NVSwitch™, to tackle these workloads. Combined with InfiniBand, NVIDIA Magnum IO™ and the RAPIDS™ suite of open-source libraries, including the RAPIDS Accelerator for Apache Spark for GPU-accelerated data analytics, the NVIDIA data center platform accelerates these huge workloads at unprecedented levels of performance and efficiency.

On a big data analytics benchmark, A100 80GB delivered insights with a 2X performance increase over A100 40GB, making it ideally suited for emerging workloads with exploding dataset sizes.

SCIENTIFIC SIMULATIONS: Accelerate scientific research and simulations, enabling faster insights and discoveries in physics, chemistry, and environmental science.

MEDIA AND ENTERTAINMENT: Render high-resolution graphics, videos, and animations with lightning speed. Deliver exceptional visual experiences to your audience without compromising on quality.

FINANCIAL MODELING: Analyze vast datasets and perform complex financial modelling with unmatched speed, providing critical insights for informed decision-making.
